Berant’s interest in natural language processing began with a linguistics course he took at the Open University during his military service. “My head exploded,” he recalls. “I thought this was amazing.” He wanted to pursue the topic, but he also recognized that he had some “exact science tendencies,” so after his service was finished, he enrolled at Tel Aviv University in a joint computer science and linguistics program. Four years later, Berant started his PhD as an Azrieli Fellow, eventually zeroing in on a problem in natural language processing called textual entailment.

Given two statements, can you infer one from the other? To humans, it’s clear that if Amazon acquires MGM Studios, that means that Amazon owns MGM Studios. But these leaps are trickier for a computer to make. After all, if you acquire a second language, that doesn’t mean that you own it. Berant’s thesis focused on using the underlying structures of language — properties like transitivity, which means that if A implies B and B implies C, A must imply C — to help computers make better inferences. Despite all the progress over the past decade, textual entailment is a problem that researchers are still grappling with, Berant says: “It kind of encapsulates a lot of the things that you need to do in order to understand language.”
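
To make the transitivity idea concrete, here is a minimal Python sketch. It is not Berant's method, only an illustration: the entailment rules (including the intermediate "X buys Y" step) and the function name `transitive_closure` are assumptions invented for this example. Given a few known entailments, it chains them to derive the ones that follow.

```python
from itertools import product


def transitive_closure(edges):
    """Return every (premise, hypothesis) pair reachable by chaining the given pairs."""
    closure = set(edges)
    changed = True
    while changed:
        changed = False
        # Iterate over a snapshot so the set can grow during the loop.
        for (a, b), (c, d) in product(list(closure), repeat=2):
            # If a implies b and b implies d, then a implies d.
            if b == c and a != d and (a, d) not in closure:
                closure.add((a, d))
                changed = True
    return closure


# Hypothetical entailment rules, chosen only for illustration.
rules = {
    ("X acquires Y", "X buys Y"),
    ("X buys Y", "X owns Y"),
}

inferred = transitive_closure(rules) - rules
print(inferred)  # {('X acquires Y', 'X owns Y')}
```

The sketch also shows why the problem is hard: a rule like "X acquires Y implies X owns Y" is only safe when Y is a company rather than a language, and nothing in this toy graph captures that distinction.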

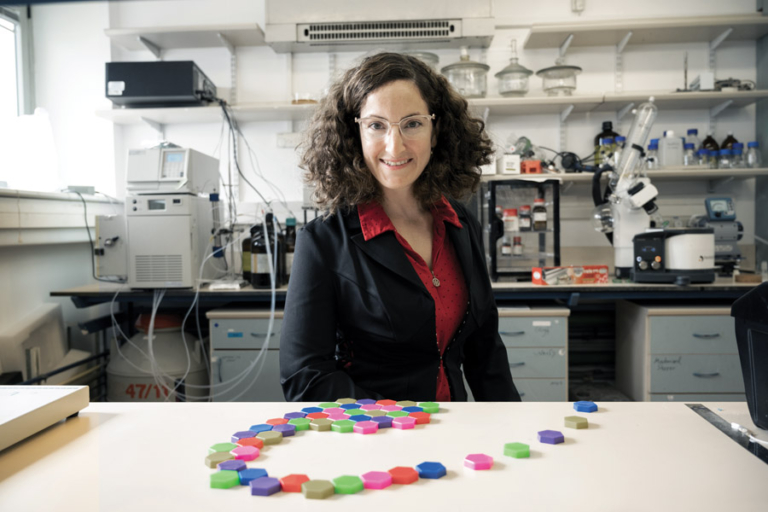

After completing his PhD, Berant headed to Stanford University in California for a postdoctoral fellowship. He began working with computer scientists Percy Liang and Christopher Manning and shifted his focus to semantic parsing: the task of translating a single natural-language sentence into a logical form that a computer can understand and act upon. If you tell the virtual assistant on your phone, “Book me a ticket on the next flight to New York, but only a morning flight, with no connections,” that’s a very specific set of instructions that the software has to understand, no matter how you phrase it.

The first attempts to build computer systems that could understand natural language, starting in the 1960s, relied on rules. In a sufficiently narrow domain, you could tell the computer everything it needed to know in order to answer questions. But that approach has limits. “There’s a lot of world out there,” as Liang once put it, “and it’s messy too.”
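
A toy Python sketch can illustrate both what a rule-based approach looks like and where it breaks down. Everything here is hypothetical: the pattern `FLIGHT_RULE`, the function `parse`, and the dictionary used as a "logical form" are assumptions made for illustration, not a description of any real system.

```python
import re

# One hand-written rule covering a single phrasing of a flight request.
FLIGHT_RULE = re.compile(
    r"book me a ticket on the next flight to (?P<city>[a-z ]+?)"
    r"(?P<morning>, but only a morning flight)?"
    r"(?P<direct>, with no connections)?$"
)


def parse(utterance):
    """Map an utterance to a toy logical form (a dict), or None if no rule matches."""
    match = FLIGHT_RULE.match(utterance.lower().rstrip("."))
    if match is None:
        return None
    return {
        "action": "book_flight",
        "destination": match.group("city"),
        "time_of_day": "morning" if match.group("morning") else None,
        "max_connections": 0 if match.group("direct") else None,
    }


# The phrasing the rule was written for is understood.
understood = parse(
    "Book me a ticket on the next flight to New York, "
    "but only a morning flight, with no connections"
)

# A paraphrase with the same meaning falls outside the rule and yields None.
missed = parse("I need a nonstop morning flight to New York")
```

The first request produces a structured result with the destination, time of day and connection limit filled in. The second asks for the same thing in different words, and the rule simply does not fire. Covering every such paraphrase by hand is the kind of limit the rule-based approach runs into.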